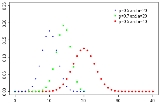

Binomial distribution

Overview

Probability theory

Probability theory is the branch of mathematics concerned with analysis of random phenomena. The central objects of probability theory are random variables, stochastic processes, and events: mathematical abstractions of non-deterministic events or measured quantities that may either be single...

and statistics

Statistics

Statistics is the study of the collection, organization, analysis, and interpretation of data. It deals with all aspects of this, including the planning of data collection in terms of the design of surveys and experiments....

, the binomial distribution is the discrete probability distribution of the number of successes in a sequence of n independent

Statistical independence

In probability theory, to say that two events are independent intuitively means that the occurrence of one event makes it neither more nor less probable that the other occurs...

yes/no experiments, each of which yields success with probability

Probability

Probability is ordinarily used to describe an attitude of mind towards some proposition of whose truth we arenot certain. The proposition of interest is usually of the form "Will a specific event occur?" The attitude of mind is of the form "How certain are we that the event will occur?" The...

p. Such a success/failure experiment is also called a Bernoulli experiment or Bernoulli trial

Bernoulli trial

In the theory of probability and statistics, a Bernoulli trial is an experiment whose outcome is random and can be either of two possible outcomes, "success" and "failure"....

; when n = 1, the binomial distribution is a Bernoulli distribution.